UAI 2014 - Invited Speakers

UAI 2014 is pleased to announce the following invited speakers:

Banquet Speaker

Yann LeCun

Facebook AI Research & Center for Data Science, NYU

The unreasonable effectiveness of deep learning

Keynote speaker

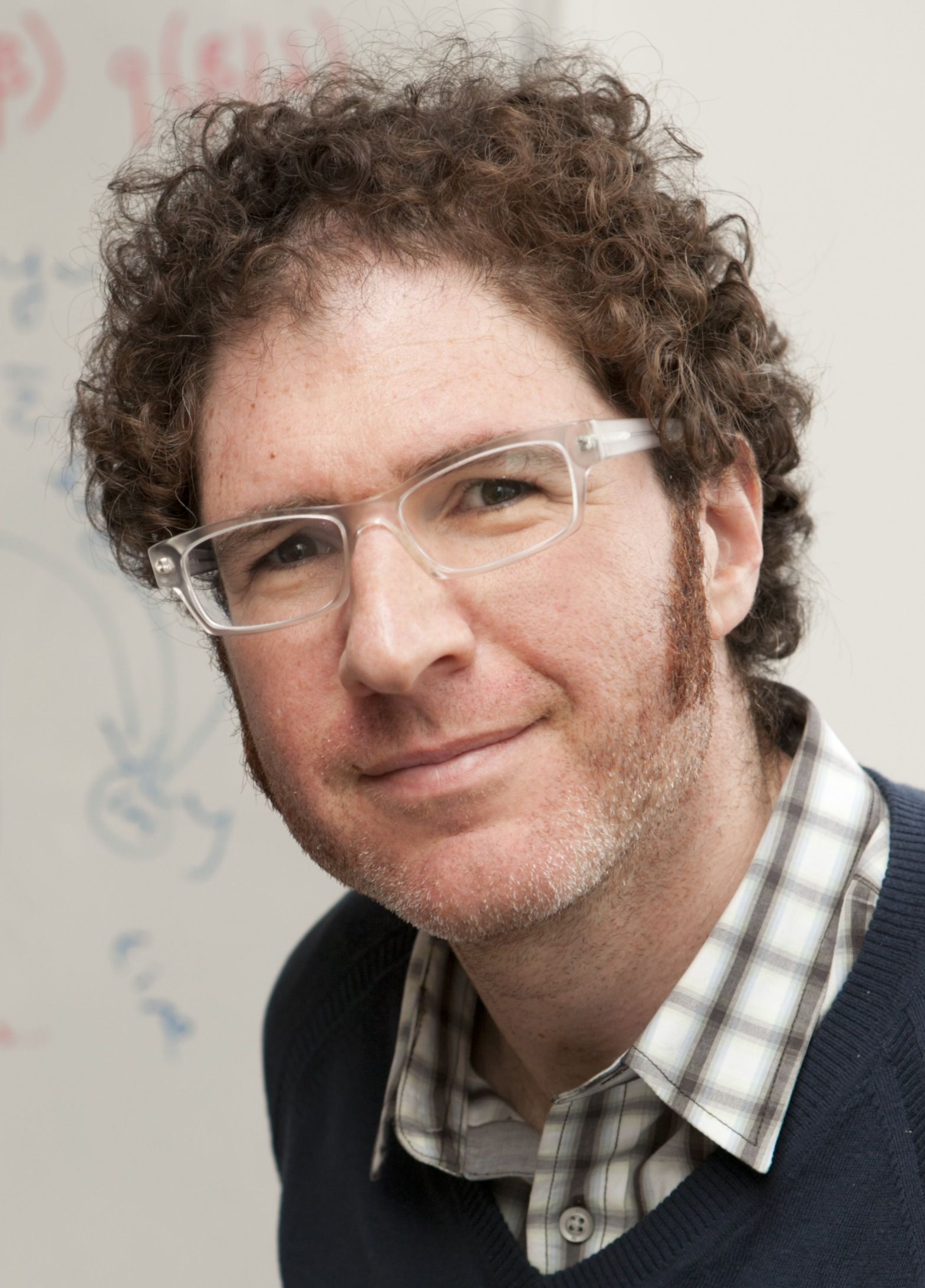

David M. Blei

Columbia University

Probabilistic Topic Models and User Behavior

Abstract

Probabilistic topic models provide a suite of tools for analyzing large document collections. Topic modeling algorithms discover the latent themes that underlie the documents and identify how each document exhibits those themes. Topic modeling can be used to help explore, summarize, and form predictions about documents. Topic modeling ideas have been adapted to many domains, including images, music, networks, genomics, and neuroscience.

Traditional topic modeling algorithms analyze a document collection and estimate its latent thematic structure. However, many collections contain an additional type of data: how people use the documents. For example, readers click on articles in a newspaper website, scientists place articles in their personal libraries, and lawmakers vote on a collection of bills. Behavior data is essential both for making predictions about users (such as for a recommendation system) and for understanding how a collection and its users are organized.

In this talk, I will review the basics of topic modeling and describe our recent research on collaborative topic models, models that simultaneously analyze a collection of texts and its corresponding user behavior. We studied collaborative topic models on 80,000 scientists' libraries from Mendeley and 100,000 users' click data from the arXiv. Collaborative topic models enable interpretable recommendation systems, capturing scientists' preferences and pointing them to articles of interest. Further, these models can organize the articles according to the discovered patterns of readership. For example, we can identify articles that are important within a field and articles that transcend disciplinary boundaries.

More broadly, topic modeling is a case study in the large field of applied probabilistic modeling. Finally, I will survey some recent advances in this field. I will show how modern probabilistic modeling gives data scientists a rich language for expressing statistical assumptions and scalable algorithms for uncovering hidden patterns in massive data.

Biographical details

David Blei is a Professor of Statistics and Computer Science at Columbia University. His research is in statistical machine learning, involving probabilistic topic models, Bayesian nonparametric methods, and approximate posterior inference. He works on a variety of applications, including text, images, music, social networks, user behavior, and scientific data.

David earned his Bachelor's degree in Computer Science and Mathematics from Brown University (1997) and his PhD in Computer Science from the University of California, Berkeley (2004). Before arriving at Columbia, he was an Associate Professor of Computer Science at Princeton University. He has received several awards for his research, including a Sloan Fellowship (2010), Office of Naval Research Young Investigator Award (2011), Presidential Early Career Award for Scientists and Engineers (2011), Blavatnik Faculty Award (2013), and ACM-Infosys Foundation Award (2013).

Keynote speaker

Craig Boutilier

University of Toronto

Addressing the Practical Challenges of Group Decision Support in a Data-rich World

Abstract

In a variety of social, business, political and economic contexts, decision making often involves groups of people or multiple stakeholders. Automated decision support for groups is considerably more difficult than for a single stakeholder since it requires aggregating the preferences of members of the group to determine optimal decisions. This brings with it the need to assess far more preference information, and often demands sophisticated mechanisms and optimization techniques to limit opportunities for manipulation.

These problems are widely studied within the field of computational social choice. In this talk, I'll outline some of the models and methods my group has been developing to address the informational and computational challenges needed to make decision support for groups more practical and widely applicable. This includes: robust optimization methods for handling partial preference information; interactive elicitation techniques to minimize the amount of preference information needed to compute optimal decisions; optimization methods for combinatorial group decisions; preference learning from choice data; and optimization and elicitation techniques that exploit probabilistic preference models derived from data. I'll conclude with a discussion of some emerging opportunities, and associated challenges, in group decision support.

Biographical details

Craig Boutilier is a Professor in the Department of Computer Science at the University of Toronto and co-founder of Granata Decision Systems. He received his Ph.D. in Computer Science from the University of Toronto in 1992, and worked as an Assistant and Associate Professor at the University of British Columbia from 1991 until his return to Toronto in 1999.

His current research efforts focus on various aspects of decision making under uncertainty: preference elicitation, mechanism design, game theory and multiagent decision processes, economic models, social choice, computational marketing and advertising, Markov decision processes, reinforcement learning and probabilistic inference.

Boutilier is currently Editor-in-Chief of the Journal of Artificial Intelligence Research (JAIR) and was Program Chair of the 16th Conference on Uncertainty in Artificial Intelligence (UAI-2000) and the 21st International Joint Conference on Artificial Intelligence (IJCAI-09). He is a Fellow of the Association for Computing Machinery (ACM) and the Association for the Advancement of Artificial Intelligence (AAAI).

Keynote speaker

Michael Littman

Brown University, Department of Computer Science

Planning In The Context Of Model-based Reinforcement Learning

Abstract

Reinforcement learning (RL) is the problem of deriving goal-directed behavior from interaction with an environment. Model-based approaches to RL separate the task into two pieces: use ideas from machine learning to infer the dynamics of the environment in the form of a transition model, then use ideas from automated planning to decide what to do in this model. While, in principle, generic learning and planning approaches can be brought to bear, both subproblems are impacted by the fact that they are being applied in tandem. I will describe how my group has modified learning and planning approaches to work together in model-based RL with a focus on planning techniques that we have found particularly well suited in this setting.

Biographical details

Michael L. Littman's research in machine learning examines algorithms for decision making under uncertainty. He has earned multiple awards for teaching, and his research has been recognized with three best-paper awards on the topics of meta-learning for computer crossword solving, complexity analysis of planning under uncertainty, and algorithms for efficient reinforcement learning. Littman has served on the editorial boards for the Journal of Machine Learning Research and the Journal of Artificial Intelligence Research. He was general chair of the International Conference on Machine Learning 2013 and program chair of the Association for the Advancement of Artificial Intelligence Conference 2013.

Keynote speaker

Andrew Ng

Stanford University

The Online Revolution: Education for Everyone

Abstract

In 2011, Stanford University offered three online courses, which anyone in the world could enroll in and take for free. Together, these three courses had enrollments of around 350,000 students, making this one of the largest experiments in online education ever performed. Since the beginning of 2012, we have transitioned this effort into a new venture, Coursera, a social entrepreneurship company whose mission is to make high-quality education accessible to everyone by allowing the best universities to offer courses to everyone around the world, for free. Coursera classes provide a real course experience to students, including video content, interactive exercises with meaningful feedback (using both auto-grading and peer-grading), and rich peer-to-peer interaction around the course materials. Currently, Coursera has 100 university and other partners, and over 5 million students enrolled in its more than 500 courses. These courses span a range of topics including computer science, business, medicine, science, humanities, social sciences, and more.

In this talk, I'll report on this far-reaching experiment in education, and why we believe this model can provide both an improved classroom experience for our on-campus students, via a flipped classroom model, as well as a meaningful learning experience for the millions of students around the world who would otherwise never have access to education of this quality.

Biographical details

Andrew Ng is a Co-founder of Coursera, and a Computer Science faculty member at Stanford. In 2011, he led the development of Stanford University's main MOOC (Massive Open Online Courses) platform, and also taught an online Machine Learning class that was offered to over 100,000 students, leading to the founding of Coursera. Ng's goal is to give everyone in the world access to a high quality education, for free. Today, Coursera partners with top universities to offer high quality, free online courses. With over 100 partners, over 500 courses, and 5 million students, Coursera is the largest MOOC platform in the world. Recent awards include being named to the Time 100 list of the most influential people in the world; to the CNN 10: Thinkers list; Fortune 40 under 40; and being named by Business Insider as one of the top 10 professors across Stanford University. Outside online education, Ng's research work is in machine learning; he is also the Director of the Stanford Artificial Intelligence Lab.